MASK AS LOGIC

Reveal and Conceal in Brand Identity in the Generative AI Era

Mask as Logic explores how identity is constructed through what is revealed and what is concealed.

Traditional mask-making builds meaning through layers, with each decision shaping how the mask is understood by a specific audience. This project applies that same logic to brand identity in the age of AI.

Today, non-designers can instantly generate brand visuals. However, without a defined identity, these outputs often feel generic and interchangeable. The problem is not access to design, but rather the lack of structure to define meaning before generation.

This project introduces a system that translates human intuition into a structured identity that can be used consistently across AI tools.

Each year, millions of businesses start without access to professional design, yet identity determines whether they are noticed, trusted, or chosen. Generative AI has made creating visuals fast and accessible, but the problem has shifted rather than disappeared. AI produces outputs based on common patterns, resulting in brands that look polished but feel indistinguishable. Founders understand their work deeply, but without a structured way to translate that understanding into design, they end up generating visuals before defining what those visuals should mean.

Problem and objective

This thesis draws from the logic of masks, where identity is constructed through decisions about what to reveal and what to conceal. A mask is not decoration, but a system of controlled visibility, designed to communicate in a specific way to a specific audience. Brand identity operates similarly, as a structured set of signals rather than a collection of visuals. The challenge in the AI era is translating human intention, which is intuitive and emotional, into a form that machines can interpret without losing meaning. MaskIt was designed as a translation layer to bridge this gap.

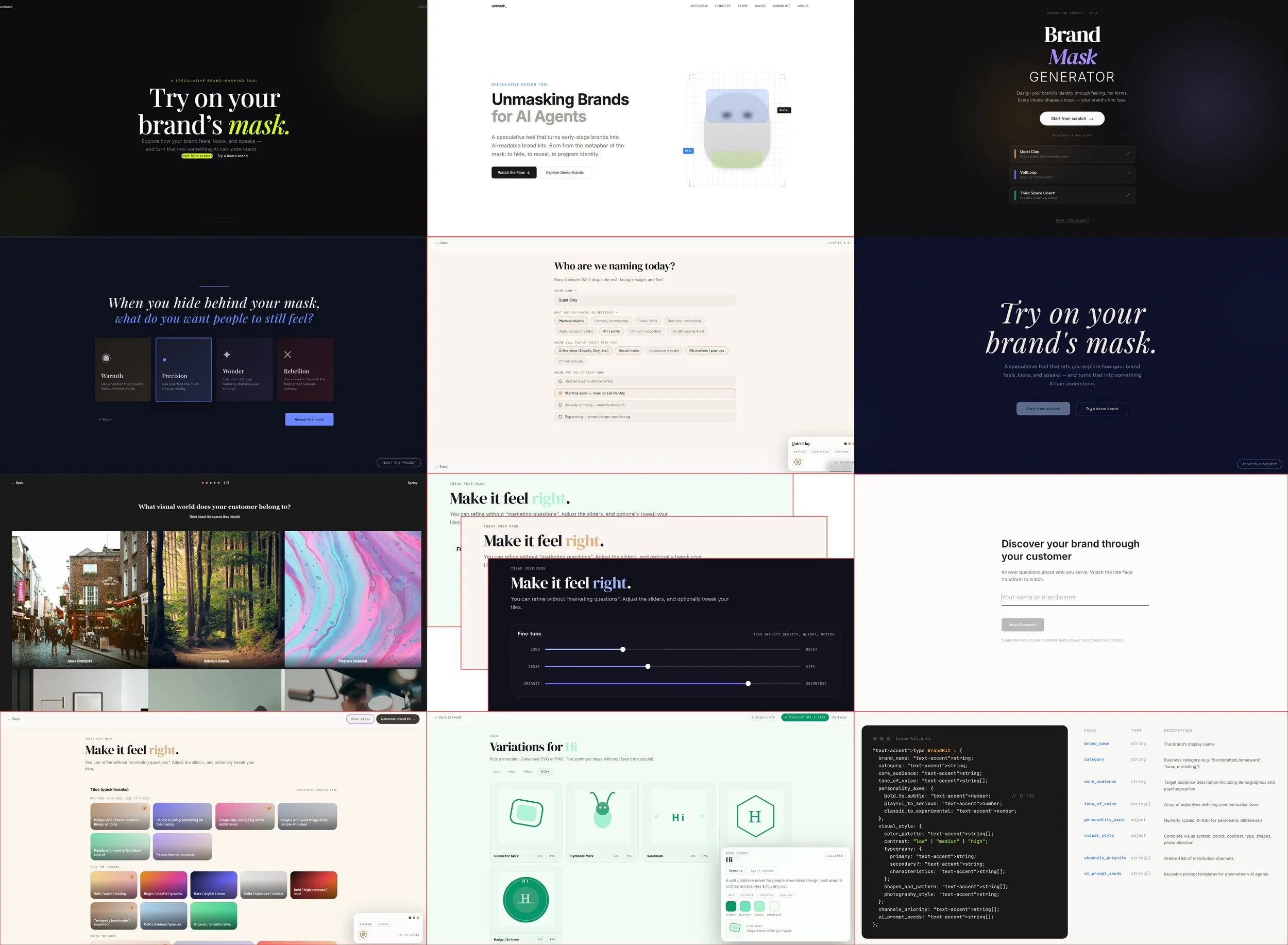

The MaskIt system works in two phases that transform intuition into structure. The first phase captures visual instinct through image-based selections, allowing users to define mood, color, typography, and spatial qualities without relying on design language. The second phase introduces questions about intention, audience, and values, refining and aligning the visual foundation with internal meaning.

System logic

All inputs are translated into parameters and processed through a logic system that maintains consistency while adapting tone and strategy, resulting in a structured brand kit that can be used across platforms and AI tools.
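As a rough illustration of this logic, the sketch below shows one way inputs could be mapped to parameters and combined into a reusable brand kit. It is not the MaskIt implementation: the option names, the audience-to-tone rule, the `BrandKit` fields, and the `build_brand_kit` and `to_prompt` helpers are all hypothetical, chosen only to make the idea concrete.

```python
from dataclasses import dataclass

# Hypothetical Phase 1 options: each image-based selection maps to visual parameters.
PHASE1_OPTIONS = {
    "calm_minimal": {
        "mood": "calm",
        "palette": ["#F4F1EC", "#2F3E46"],
        "typeface": "geometric sans",
    },
    "bold_playful": {
        "mood": "energetic",
        "palette": ["#FF5A5F", "#FFD166"],
        "typeface": "rounded display",
    },
}

# Hypothetical Phase 2 rule: the audience answer adapts tone
# while the visual foundation from Phase 1 stays fixed.
TONE_BY_AUDIENCE = {
    "professionals": "measured and precise",
    "families": "warm and reassuring",
}
DEFAULT_TONE = "clear and neutral"


@dataclass(frozen=True)
class BrandKit:
    """Structured identity assembled from both phases (illustrative fields)."""
    mood: str
    palette: tuple[str, ...]
    typeface: str
    tone: str
    values: tuple[str, ...]

    def to_prompt(self) -> str:
        # The same kit always yields the same text, so it can be pasted
        # into different AI tools without the identity drifting.
        return (
            f"Mood: {self.mood}. "
            f"Colors: {', '.join(self.palette)}. "
            f"Typeface: {self.typeface}. "
            f"Tone of voice: {self.tone}. "
            f"Core values: {', '.join(self.values)}."
        )


def build_brand_kit(visual_choice: str, audience: str, values: list[str]) -> BrandKit:
    """Translate a Phase 1 selection and Phase 2 answers into one brand kit."""
    if visual_choice not in PHASE1_OPTIONS:
        raise ValueError(f"Unknown visual choice: {visual_choice!r}")
    visual = PHASE1_OPTIONS[visual_choice]
    tone = TONE_BY_AUDIENCE.get(audience, DEFAULT_TONE)
    return BrandKit(
        mood=visual["mood"],
        palette=tuple(visual["palette"]),
        typeface=visual["typeface"],
        tone=tone,
        values=tuple(values),
    )


if __name__ == "__main__":
    kit = build_brand_kit("calm_minimal", "families", ["craft", "honesty"])
    print(kit.to_prompt())
```

In this sketch the visual parameters come only from the image-based selection, while the audience answer changes tone alone. That separation is one possible reading of "maintains consistency while adapting tone and strategy", and the immutable kit stands in for a structure that stays the same across platforms.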

Testing with both designers and non-designers showed that while the system was intuitive and efficient, the outputs sometimes felt superficial and not fully aligned with the intended brand meaning. The process emphasized self-definition more than audience perception, and did not fully leverage AI’s ability to interpret inputs. In response, the system was refined to strengthen audience-focused questions, expand visual differentiation, and make the relationship between inputs and outputs more visible, shifting it from a tool that generates results to one that builds understanding.

Testing and iteration

Final Version

Phase 1: external visual landscape

Phase 2: internal identity

Outcome: brand kit generation

The next step is to integrate adaptive AI, allowing the system to respond dynamically to each input rather than relying on fixed questions. This project ultimately shifted my role as a designer from creating visual outcomes to constructing systems that produce them. In the context of generative AI, design becomes the logic that enables identity to move clearly between human intention and machine generation.